Research

The pages below provide additional information and references to research outputs for the following research topics:

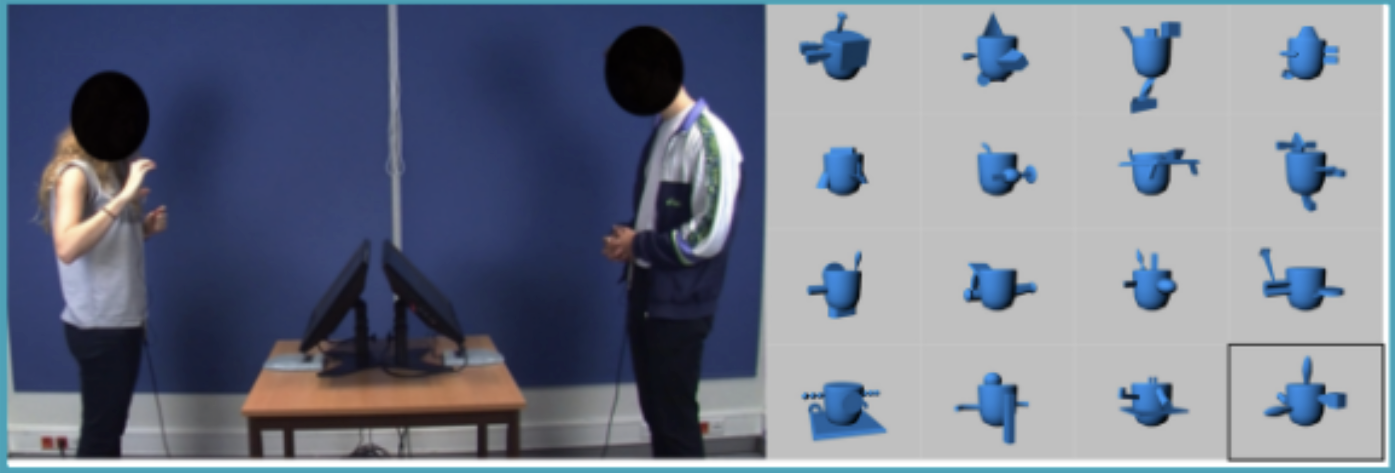

- Multimodal face-to-face dialogue modeling. I am currently a postdoctoral researcher in the Dialogue Modelling Group at the Institute for Logic, Language & Computation (ILLC), University of Amsterdam. My primary research focuses on face-to-face dialogue modeling. Specifically, I study dialogue coordination in order to model and understand how cross-modal convergence emerges and is maintained when interlocutors speak about novel objects. In this work, I collaborate with leading linguists and cognitive scientists specializing in dialogue and gestures from UvA, Radboud University, and TU Dresden.

- Multimodal emotion recognition. My previous research focused on modeling and recognizing human behavior and communication through multiple cues, including speech, gestures, facial expressions, and body postures. During my Ph.D. at Maastricht University, I researched multimodal emotion recognition using visual and auditory cues. In my doctoral research, I developed novel computational methods that capture the complementary information in bimodal cues for emotion recognition. I also worked on the Horizon 2020 project MaTHiSiS, developing multimodal fusion methods for emotion recognition in an e-learning platform.

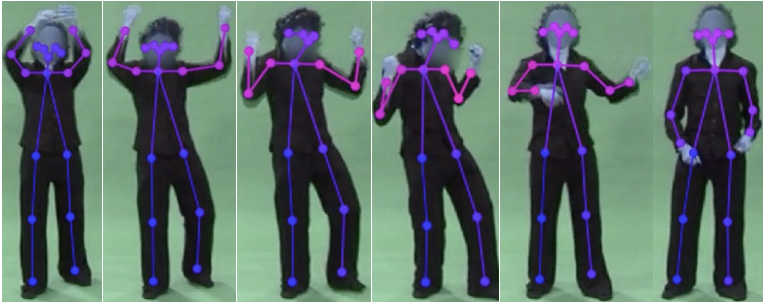

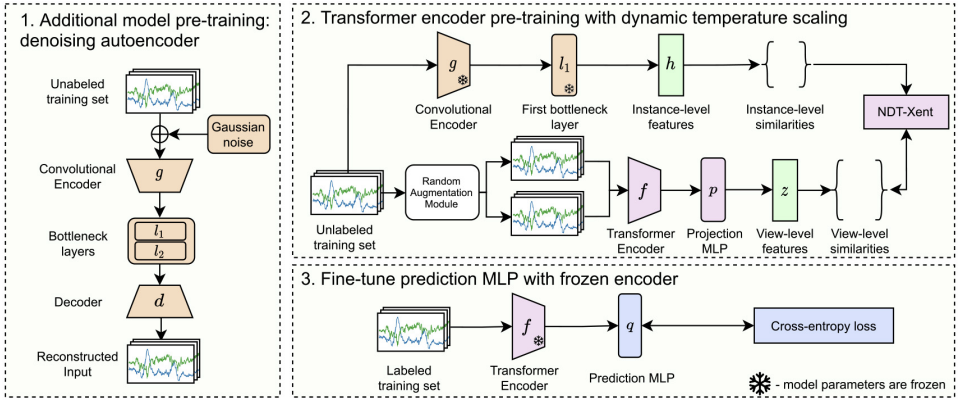

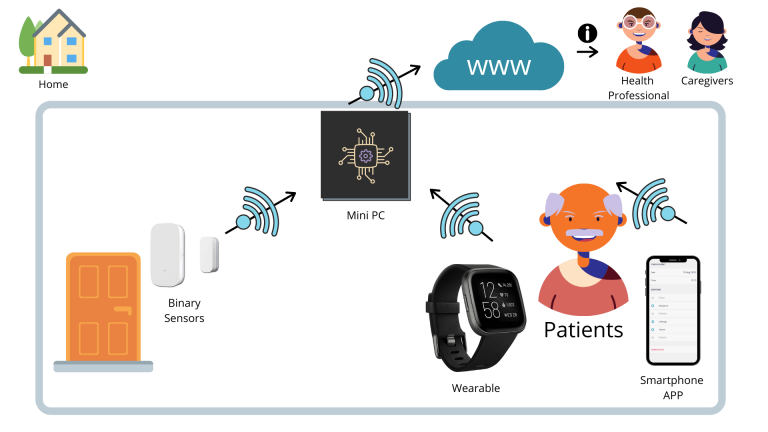

- Explainable AI for emotion and behavior recognition. In my postdoctoral research at UM, my work extended beyond conventional explainable AI on audio and video as expressive modalities, directing attention toward gestural expressions for emotion recognition. During that period, I acted as a daily supervisor for a PhD candidate working on self-supervised learning for human activity recognition. In addition, I worked on multimodal human behavioral analysis for people with neurodegenerative diseases in the Horizon 2020 EU project ProCare4Life, which aimed to detect abnormalities in patients' symptoms and activities. I collaborated with socio-health professionals to develop a decision support system based on state-of-the-art computational models, demonstrating the benefits of complementing machine intelligence with human expertise using hybrid AI.